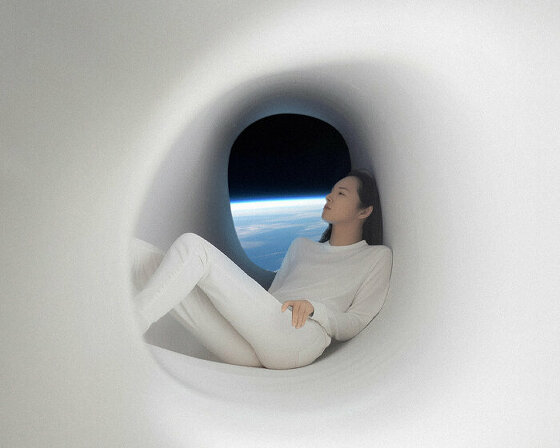

happening now! at milan design week 2024, samsung creates a world where the boundaries between the physical and digital realms blur.

PRODUCT LIBRARY

BMW releases the upgraded vision neue klasse X, with a series of new technologies and materials especially tailored for the upcoming electric smart car.

following the unveiling at frieze LA 2024, designboom took a closer look at how the color-changing BMW i5 flow NOSTOKANA was created.

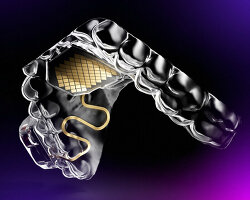

each unit draws inspiration from emergence, featuring a hexahedron-based structure that facilitates integration into larger systems.

brian eno revives his color-changing neon turntable for the second time, also on display at paul stolper gallery in london until march 9th, 2024.
